Your best engineer just quit. You found out on a Tuesday morning. Their manager is not surprised but also never said anything. HR had no data suggesting this was coming. Now you are six weeks from a product deadline, down a senior person, and about to spend three months recruiting and three more months onboarding someone to replace institutional knowledge that walked out the door.

That sequence is not bad luck. It is a data problem. The signals were there. They always are. Someone going quiet in meetings two months ago. Tenure in the same role crossing a threshold that historically correlates with departure. Compensation drifting below market for their skill set without anyone noticing. A recent manager change that the team absorbed quietly but that measurably shifted the dynamic. None of those signals got seen because nobody was looking at them together, and nobody had a system that would have flagged them even if someone had been. That is what machine learning in HR actually fixes. Not the hiring. Not the onboarding. The seeing.

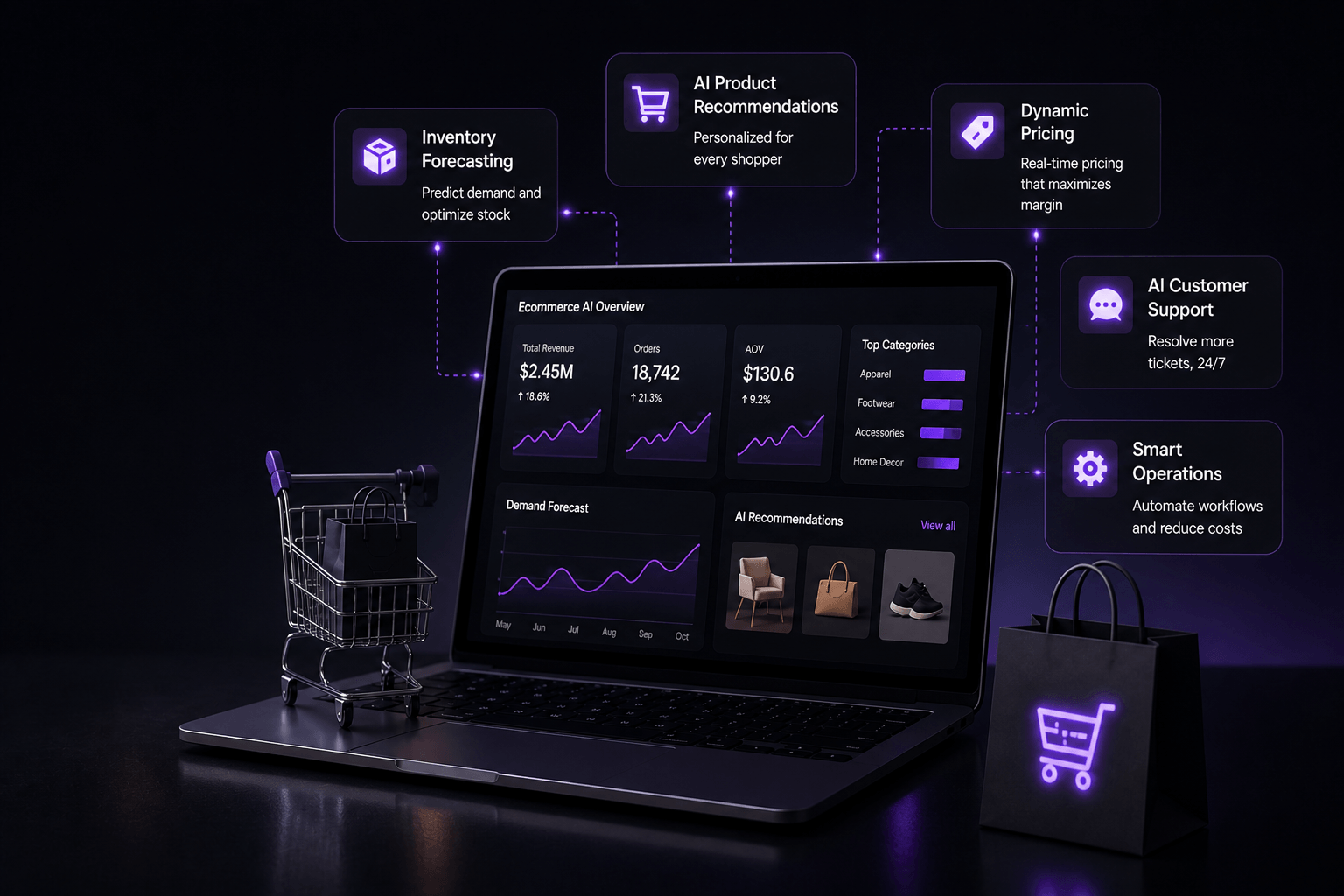

AI in human resources gets talked about mostly in terms of efficiency. Faster hiring. Less admin. Automated workflows. Those things are real and worth having. But the deeper value is visibility into what is happening in your workforce before it becomes a problem you are reacting to instead of preventing. The difference between those two modes is enormous in terms of cost, disruption, and morale. This is a practical guide to where that visibility comes from, what it actually costs to build, and how to start without losing eighteen months inside a transformation initiative that was too big and delivered too little.

I want to be honest about something upfront. AI in human resources is a domain where getting it wrong has consequences that are different in character from most other AI applications. When a demand forecasting model is off, you overstock a warehouse. When an HR AI model is off, real people are affected. Candidates who deserved a conversation did not get one. Employees who needed a development opportunity got flagged as flight risks instead. That asymmetry is worth holding in mind throughout this piece, not as a reason to avoid building these systems but as a reason to build them more carefully than you would build other things.

Decide What Problem You Are Actually Trying to Solve

Before any conversation about AI in HR technology, the question that actually matters is specific: what decision are you currently making badly because you do not have the right information at the right time? Not a general desire to modernize HR. Not a vague sense that competitors are ahead on this. A specific decision with a specific cost attached to getting it wrong.

This sounds obvious but it is the step that most organizations skip. They see a platform demo, get excited about the capabilities, and start building before they have defined what success looks like or which problem they are solving first. The result is a project that touches everything at the surface level and goes deep on nothing, which means nothing works well enough to trust and nobody uses it.

For most organizations the real candidate is one of three things. You are losing people you did not expect to lose, and by the time you find out they have already decided. That is an employee retention AI and predictive attrition problem. You are taking too long to hire, or your hiring quality is inconsistent across different managers or locations, or you keep losing candidates to faster-moving competitors. That is an AI recruitment and AI candidate screening problem. Or your HR team is spending the majority of its time on work that does not require its expertise, and the strategic work keeps getting deprioritized. That is an HR automation and HR chatbot AI problem.

Each of these has a different data requirement, a different build approach, and a different ROI timeline. The attrition problem requires years of historical HRIS data and enough resignation events to train a meaningful model. The hiring and workforce management AI problem requires clean applicant tracking data and a way to connect candidate attributes to post-hire performance outcomes. The capacity problem requires a well-organized HR knowledge base and a clear map of which workflows are consuming the most time.

Treating all of these as one thing called HR AI is how organizations end up with a large budget commitment, a multi-year roadmap, and nothing actually working because everything was built at the same level of depth rather than one thing being built properly. Pick the one that costs the most right now. Build that. Measure it honestly. Then decide what comes next based on what you learned rather than what the original roadmap said before you knew anything.

If Your Problem Is Retention: What Predictive Attrition Actually Looks Like

Most HR leaders already know which teams have retention problems. What they do not know is which specific people are about to leave, how much time they have before those people have mentally committed to going, and what is actually driving it. That is the gap predictive attrition fills and the reason employee retention AI built on good data is consistently one of the highest-ROI applications in the entire HR technology landscape.

The model works by learning what patterns in your own historical data have preceded voluntary resignations. Not generic industry benchmarks. Not signals that predict attrition at some other company with a different culture and a different workforce profile. Your data. Your organization. The patterns that have actually predicted departures in your specific context over the past three to five years. That specificity is what separates a model that produces interesting reports from one that a manager will actually act on.

Common predictors that show up consistently include tenure in the same role crossing a threshold that is specific to your organization, compensation percentile dropping relative to current market rates for the skill set, a recent manager change in teams that historically see turnover after leadership transitions, completion of a high-stakes project that was a primary engagement anchor for someone who joined specifically to work on it, declining participation in internal meetings or collaboration tools, and the absence of any substantive career development conversation in the preceding six months. None of these signals alone is meaningful. The model learns how they combine in your specific organizational context to indicate elevated departure risk.

The output is a risk score that surfaces to the right manager or HR business partner at the right time with enough context to understand what might be driving it. Not a list of every employee above a certain score. A prioritized, actionable view of who warrants a direct conversation now, before the decision to leave has hardened into a resignation. What happens in that conversation is entirely human. The AI identifies the timing and provides the context. A person decides what to do with it.
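To make the shape of that output concrete, here is a minimal sketch of how several weak signals might combine into one score and a prioritized view. The weights, the bias, and the feature names are hypothetical placeholders, not values learned from any real data; in practice they would come from a model fitted on your own HRIS history.

```python
import math

# Hypothetical coefficients standing in for what a fitted model would learn.
# None of these numbers come from real data.
WEIGHTS = {
    "long_tenure_in_role": 0.9,
    "comp_below_market": 1.1,
    "recent_manager_change": 0.7,
    "no_career_conversation_6mo": 0.8,
}
BIAS = -3.0  # hypothetical baseline: low risk when no signal is present


def risk_score(flags):
    """Combine 0/1 signal flags into a probability-like score in (0, 1)."""
    z = BIAS + sum(w * flags.get(name, 0) for name, w in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def top_driver(flags):
    """Return the signal contributing most to the score, or None if no signal is set."""
    contributions = {name: w * flags.get(name, 0) for name, w in WEIGHTS.items()}
    name = max(contributions, key=contributions.get)
    return name if contributions[name] > 0 else None


def prioritized_view(employees, limit=3):
    """Return the `limit` highest-risk employees as (id, score, top driver) rows."""
    rows = [(emp_id, risk_score(f), top_driver(f)) for emp_id, f in employees.items()]
    rows.sort(key=lambda row: row[1], reverse=True)
    return rows[:limit]
```

The `limit` parameter is the design choice that matters here: the manager sees a short list with a likely driver attached, not every employee above a cutoff.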

What I want to be clear about is that the value of this system is entirely dependent on what happens downstream of the score. A model that tells a manager someone is at risk and then nothing changes is not just worthless. It actively erodes trust in the HR function because people can sometimes sense when they have been evaluated and nothing followed. The organizations getting real retention improvement from people analytics AI have built an intervention protocol alongside the model. When a risk score crosses a threshold, a specific action happens. A conversation is scheduled. A compensation review is triggered. A development discussion is added to the next one-on-one agenda. The AI surfaces the moment. The humans make it mean something.
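An intervention protocol of this kind can be as simple as a rule table that maps score bands to the actions named above. The sketch below is illustrative only: the thresholds are hypothetical and would need to be set from your own model validation and HR policy.

```python
from dataclasses import dataclass

# Hypothetical thresholds, checked from highest to lowest.
# The actions mirror the ones described in the text.
PROTOCOL = [
    (0.80, "schedule a direct conversation"),
    (0.60, "trigger a compensation review"),
    (0.40, "add a development discussion to the next one-on-one"),
]


@dataclass
class RiskSignal:
    employee_id: str
    score: float      # model output, assumed to lie in [0, 1]
    top_driver: str   # context shown to the manager alongside the score


def action_for(signal):
    """Return the first action whose threshold the score meets, or None."""
    for threshold, action in PROTOCOL:
        if signal.score >= threshold:
            return action
    return None
```

Keeping the protocol in one explicit table makes it easy to review with managers and to audit whether a flagged score actually led to an action.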

The data foundation for this is an HRIS with at least three years of clean history, reasonably consistent recording of resignation events that distinguishes voluntary from involuntary departure, and employee attribute data that is complete enough to be useful as features. Most mid-size organizations have this data sitting in their systems unused because nobody has connected it and modeled it. Getting it into usable shape is the real work. It is not glamorous. But it is what makes everything else possible.

If Your Problem Is Hiring: Speed Versus Quality and Why You Have to Choose

AI recruitment conversations almost always start with speed. Time to fill is too long. The recruiting team is buried under application volume. Hiring managers are waiting weeks for shortlists. Those are real problems and machine learning recruitment tools genuinely address them. But before optimizing for speed it is worth being honest about what you are actually optimizing toward, because fast hiring of the wrong people is considerably worse than slow hiring of the right ones.

The most valuable thing AI hiring tools do operationally is not screen faster. It is screen more consistently. Human reviewers applying the same stated criteria to the same candidates reach different conclusions depending on how many resumes they have read that day, how similar a candidate is to someone they hired before who worked out well, what time it is, how tired they are, and dozens of other factors that have nothing to do with whether the candidate can do the job. AI candidate screening applies the same criteria with the same weight every time across every application without the attention degradation that sets in for a human reviewer somewhere around resume fifty.

Done well, this actually broadens access. Candidates who would have been filtered out by keyword matching because their experience does not use the exact vocabulary in the job description, but who have the underlying attributes that predict success in the role, surface in the shortlist. The model identifies pattern matches that a tired human reviewer scanning for specific phrases would miss. That is a genuine improvement for both the company and the candidates.

Done badly, with a model trained on historical hiring data from a pool that was not diverse to begin with, it reproduces and amplifies historical bias at a scale that no individual human reviewer could achieve. A model that learned to deprioritize certain universities because historically fewer hires came from them will continue doing so even if that pattern reflected past bias rather than genuine performance prediction. This is not a hypothetical. It has happened at real companies with real consequences. Any AI talent acquisition implementation needs ongoing outcome monitoring across demographic groups built into the operating model from day one, not added as a compliance activity after something surfaces.
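One simple form of outcome monitoring is to compare the rate at which each group advances past screening. The sketch below computes selection rates and each group's ratio to the highest rate, then flags groups below a floor of 0.8, the threshold used in the widely cited four-fifths heuristic. It is a screening check under stated assumptions, not a legal determination, and the group labels and data layout are hypothetical.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """outcomes: iterable of (group, advanced) pairs, where advanced is a bool."""
    totals = defaultdict(int)
    advanced = defaultdict(int)
    for group, was_advanced in outcomes:
        totals[group] += 1
        advanced[group] += int(was_advanced)
    return {group: advanced[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        # Nobody advanced in any group, so the ratio is undefined.
        return {group: None for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(ratios, floor=0.8):
    """Groups whose ratio falls below the floor and warrant a closer look."""
    return sorted(g for g, r in ratios.items() if r is not None and r < floor)
```

Running this on every screening cycle, rather than once at launch, is what turns it into ongoing monitoring. Small groups produce noisy rates, so a flag is a prompt to investigate, not a conclusion.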

Workforce planning AI connects to this in a way that most companies have not fully thought through. Knowing that a function needs three senior engineers in six months, and knowing that your historical time to fill for senior engineering roles is fourteen weeks including ramp to productivity, means the recruiting motion needs to start now, before the headcount is approved in the budget cycle. Machine learning workforce models that connect rolling business projections to hiring lead times and onboarding ramp periods create a proactive recruiting posture rather than a reactive one. That shift alone changes the quality and cost of hiring considerably.
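The lead-time arithmetic behind that posture is straightforward to sketch. The function names and the dates in the usage note are hypothetical; the fourteen-week lead time is the figure from the paragraph above.

```python
from datetime import date, timedelta


def latest_recruiting_start(needed_by, lead_time_weeks):
    """Latest date recruiting can begin and still have someone productive by `needed_by`."""
    return needed_by - timedelta(weeks=lead_time_weeks)


def weeks_of_slack(today, needed_by, lead_time_weeks):
    """Whole weeks left before recruiting must start. Negative means it is already late."""
    start_by = latest_recruiting_start(needed_by, lead_time_weeks)
    return (start_by - today).days // 7
```

For example, with a hypothetical planning date of `date(2025, 1, 6)`, a need date 26 weeks later on `date(2025, 7, 7)`, and a 14-week lead time, recruiting must start by `date(2025, 3, 31)`, which leaves 12 weeks of slack. A budget approval cycle can consume much of that margin, which is why the sourcing work often has to begin before headcount is formally approved.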

The third thing AI talent acquisition does well is sourcing. Instead of waiting for applications to arrive through job postings, machine learning models can proactively identify passive candidates in external talent databases who match the profile of your historically successful hires and flag them for targeted outreach. This changes the recruiting dynamic from inbound-dependent to proactive, which matters significantly in competitive talent markets where the best candidates are rarely actively looking and, when they are open to a move, are evaluating multiple opportunities simultaneously. The companies that can reach them first with a relevant message have a structural advantage that is hard to replicate without this kind of tooling.

If Your Problem Is Capacity: What HR Automation Actually Buys You

Ask any HR team honestly where their time goes and a significant portion of the answer will be work that does not require HR expertise, judgment, or relationships. Answering the same fifteen policy questions on rotation. Manually routing leave requests through an approval chain that could be automated. Chasing managers for overdue performance review completions two weeks past the deadline. Reminding employees that benefits enrollment closes in three days. Processing the administrative paperwork that accompanies every single hire and every single departure. This work is necessary. It is also a poor use of the skills that good HR professionals have and were hired for.

HR chatbot AI handles the high-volume, low-complexity query category well when it is implemented on top of accurate, well-organized documentation. An intelligent system that can answer questions about leave balances, benefits options, reimbursement processes, parental leave policies, and onboarding timelines accurately and instantly is not replacing HR. It is eliminating the work that was consuming HR capacity without adding strategic value, so that the actual HR professionals can spend their time on situations that genuinely require their judgment, their relationships, and their understanding of the people and the business.

The honest caveat here is that the value of HR chatbot AI is exactly proportional to the quality of the knowledge base underneath it. A chatbot trained on accurate, current, consistently formatted HR documentation is a genuine capacity multiplier that employees find more convenient than waiting for a reply. A chatbot trained on a mix of outdated policy documents, inconsistent formatting, and content that was never designed to be machine-readable gives confidently wrong answers to employees who needed the right ones. The content and documentation work that precedes the AI deployment is not glamorous and does not get announced in a press release but it is where the value actually lives.

HR automation handles the workflow layer that sits below the conversational interface. Leave requests routing to the right approvers automatically based on who the employee reports to and what type of leave it is. Onboarding checklists triggering across IT provisioning, facilities access, benefits enrollment, manager introductions, and compliance training, tracking completion in real time without someone manually chasing each workstream. Offboarding processes completing consistently and completely without anything falling through the gaps between departments. AI payroll automation reducing error rates and processing time in payroll operations. These are operational improvements with straightforward ROI calculations and lower implementation risk than the applications that touch hiring decisions or performance evaluation.

AI onboarding deserves specific attention because the value compounds in ways that are easy to underestimate. How a new employee experiences their first ninety days has a well-documented and significant effect on both how long they stay and how quickly they reach full productivity. Intelligent HR systems that adapt onboarding content delivery based on role and prior experience, send automated check-ins that surface concerns early, match new hires with relevant internal contacts and communities based on their profile and interests, and provide instant answers to the hundred operational questions that every new employee has without requiring a human to stop what they are doing to respond, produce a meaningfully better first experience. That better experience pays back in retention, engagement, and time to productivity over months and years in ways that are genuinely measurable.

The NLP Layer Most HR Teams Have Not Touched Yet

A significant portion of the most valuable data in any HR function is text that nobody has been able to analyze systematically at scale. Performance review narratives written by managers every cycle. Exit interview transcripts collected diligently and then filed. Engagement survey open-ended responses that employees spend time writing carefully. Recruiter notes on candidates that capture qualitative judgment that never makes it into a structured field. Grievance documentation. Job description language that has evolved organically across departments without any consistency.

You can read a hundred exit interviews and form impressions. You cannot read ten thousand and identify statistically meaningful patterns across teams, tenure bands, manager relationships, and time periods without machine learning. NLP HR applications are what make that analysis possible at the scale that produces insight rather than anecdote.

Natural language processing applied to performance review text can identify whether evaluation language varies systematically across demographic groups in ways that would not be visible from numeric ratings alone. A manager who consistently uses words associated with potential and leadership when writing about certain employees and words associated with execution and reliability when writing about others may be producing numeric ratings that look similar while embedding meaningful bias into the written record that affects promotion decisions downstream. This kind of analysis at scale across thousands of reviews is not possible without NLP HR tooling.

Applied to exit interview data, NLP can surface the actual themes driving attrition across different parts of the organization rather than the curated version that appears in manager-aggregated surveys. When employees leave they are often more candid in writing than they are in the final conversation with their manager. Systematic analysis of what they actually say, at scale, produces a more accurate picture of what is driving turnover than any manually compiled summary.

People analytics AI that incorporates both structured behavioral and transactional data and unstructured text from surveys and reviews is more predictive than people analytics AI working from structured data alone. The combination gives the model a richer picture of what is happening with employees and produces recommendations that are more specific and more actionable than either data source could support independently.

AI employee engagement built on the NLP layer changes the feedback cadence from periodic to continuous. Instead of waiting for annual or quarterly survey results, aggregate sentiment analysis across communication and survey data provides an ongoing signal that surfaces changes in team mood or organizational climate in time to respond. The application here is not monitoring individual employees. It is understanding at an organizational level whether engagement is moving in the right direction and what specifically is driving the movement. That is a different and considerably more useful thing than a quarterly score on a dashboard.

AI Performance Management: What Works and What Backfires

The annual performance review is widely understood to be a poor feedback instrument. By the time it happens the most relevant events from the first half of the year are eight months old. Recency bias pulls both managers and employees toward the last sixty days. The conversation is complicated by its proximity to compensation decisions in ways that make honest developmental dialogue harder. And yet most organizations still structure their entire performance management process around this cycle because changing it requires organizational will that is hard to sustain through competing priorities.

AI performance management does not fix the annual review problem by itself. But it does change what is possible within and around it. Machine learning applied to performance data can make feedback more frequent and more specific by surfacing coaching opportunities in real time rather than waiting for a cycle. It can help calibrate ratings across managers who demonstrably apply different standards in ways that make ratings meaningless for cross-functional comparison. It can connect development investments to actual performance trajectory over time so that the organization can understand which interventions work rather than just which programs exist.

HR data analytics in the performance context shifts from descriptive to prescriptive. Not just a dashboard showing what performance looked like last quarter but a system that can identify early indicators of high potential in employees who are not yet visible to senior leadership, flag teams where performance is trending down before the decline shows up in results, and help managers understand which specific development actions have historically moved the needle for employees in similar roles at similar tenure levels.

Where AI performance management goes wrong, and it goes wrong at a meaningful number of companies, is when the data collection starts to feel like surveillance rather than support. If employees come to believe that every meeting they attend, every message they send, every project interaction, and every collaboration tool activity is being logged and fed into a system that produces a score which influences their career trajectory, they change their behavior in ways that optimize the metrics being measured rather than the actual work. They attend meetings they do not need to attend. They communicate through logged channels rather than efficient informal ones. They prioritize visible activity over genuinely productive work. That behavioral distortion is worse for performance than the absence of any AI system at all.

The organizations doing AI performance management well have invested heavily in being transparent with employees about exactly what is being measured, exactly what it is used for, what decisions it does and does not influence, and what avenues exist for employees to contest or contextualize a data point they believe is misleading. That transparency is not a legal compliance activity. It is the thing that determines whether the system builds trust in the fairness of performance decisions or destroys the psychological safety that good performance depends on in the first place.

The Data Conversation You Need to Have Before Any of This

HR data is almost always more fragmented than it appears from the outside, and frequently more fragmented than HR leaders themselves realize until they start trying to do something with it. The HRIS has some of it. The performance management system, which may or may not be the same platform, has some of it formatted differently. The applicant tracking system has the recruiting history going back however many years since the last platform migration, which erased everything before it. The learning management system has training completion data. The engagement survey platform sits in its own database that has never been connected to anything else. Payroll runs on something separate from all of them. None of these were designed to talk to each other. None of the data was collected with machine learning applications in mind.

Before any meaningful machine learning HR system can be built and trusted, there is data infrastructure work that has to happen. This is the part that AI vendors tend to mention briefly before moving on to the demo. It is also the part that determines whether the project succeeds or produces six months of expensive work and a model that nobody uses because nobody trusts the output.

The questions worth answering honestly before you commit: How many years of clean HRIS data do you actually have, accounting for platform migrations that may have disrupted continuity? Are resignation events recorded consistently and in a way that reliably distinguishes voluntary from involuntary departure? A model trained on data that conflates the two will produce predictions that are wrong in systematic ways. Is compensation data current and consistently formatted across the organization, or do different business units record it differently in ways that make cross-organizational comparison unreliable? Are performance ratings truly comparable across managers and business units, or do the distributions vary so significantly that a four from one manager and a four from another mean completely different things about the underlying employee?
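The last of those questions, whether ratings are comparable across managers, can be checked with a few lines of code. The sketch below profiles each manager's rating distribution and expresses a rating relative to that manager's own scale. The data layout is hypothetical, and standardizing this way assumes teams are broadly similar in underlying performance, which will not always hold.

```python
from collections import defaultdict
from statistics import mean, pstdev


def manager_profiles(ratings):
    """ratings: iterable of (manager, rating). Returns {manager: (mean, std dev)}."""
    by_manager = defaultdict(list)
    for manager, rating in ratings:
        by_manager[manager].append(rating)
    return {m: (mean(values), pstdev(values)) for m, values in by_manager.items()}


def z_score(profiles, manager, rating):
    """How far a rating sits from that manager's own average, in standard deviations."""
    average, spread = profiles[manager]
    if spread == 0:
        # The manager gives everyone the same rating, so it carries no relative signal.
        return 0.0
    return (rating - average) / spread
```

If one manager rates `[4, 4, 5, 5]` and another rates `[2, 3, 4, 3]`, a four is below average for the first and well above average for the second. Large gaps between manager profiles are a sign that raw ratings should not be fed into a model as if they shared one scale.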

The honest answer to these questions at most organizations is that the data is partial, inconsistent in places, and will require real remediation work before it can support the models being proposed. That is not a reason not to proceed. It is a reason to build the data work into the project plan rather than discovering it as a blocker six months in. Getting the data foundation right is not glamorous. It does not feature in vendor demos. But it is what separates HR AI implementations that deliver real and lasting value from the ones that produce impressive presentations and disappointing production performance.

At Resourcifi AI we start every HR AI engagement with a data readiness assessment before we write a line of model code. Not because we want to slow things down. Because we have seen enough projects fail at the data layer to know that skipping this step does not make the project faster. It makes it longer, more expensive, and more likely to end with a system that the organization abandons rather than scales.

What to Actually Do Next

If the opening scenario resonated, the most useful next step is not an RFP for HR AI platforms or a vendor shortlist. It is a data audit focused specifically on attrition. What resignation data do you have and how far back does it go? Are the events recorded consistently? What employee attributes are captured at the time of departure versus reconstructed from other sources? What is the ratio of voluntary to involuntary departure in your records? The answers to these questions tell you whether a predictive attrition model is buildable with your current data or whether there is foundational work to do first.
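A first pass at that audit can be a short script over an export of departure records. This is a minimal sketch: the record layout (a `date` and a `type` field per departure) is a hypothetical stand-in for whatever your HRIS export actually contains.

```python
from collections import Counter
from datetime import date


def audit_departures(records):
    """records: list of dicts with hypothetical keys 'date' (datetime.date or None)
    and 'type' ('voluntary', 'involuntary', or None when nothing was recorded)."""
    types = Counter(r.get("type") or "unlabeled" for r in records)
    dates = [r["date"] for r in records if r.get("date")]
    span_years = (max(dates) - min(dates)).days / 365.25 if dates else 0.0
    return {
        "total": len(records),
        "by_type": dict(types),
        "unlabeled_share": types["unlabeled"] / len(records) if records else 0.0,
        "history_years": round(span_years, 1),
    }
```

A high `unlabeled_share` or a short `history_years` value suggests there is foundational data work to do before a predictive attrition model is worth attempting.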

If the hiring scenario resonated more, the equivalent first step is an honest analysis of your historical hiring data. Which roles have the highest variance in post-hire performance outcomes? What attributes were captured about candidates at the time of hire? Is there a way to connect candidate data to performance data that the privacy and consent framework at your organization supports? Is your ATS data clean enough to be modeled, or has it accumulated inconsistencies across years of use by different recruiters applying different conventions?

If the capacity scenario resonated most directly, the first step is simpler than the other two. Map the ten most frequent HR queries and workflows by volume over the past quarter. The ones at the top of that list are the first and best candidates for HR chatbot AI and HR automation. Build those first, measure the time saved and the accuracy of the responses, and use that foundation of demonstrated value to expand rather than starting with everything at once.
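That mapping exercise needs very little tooling. The sketch below assumes you can export one category label per HR ticket or query for the quarter; the export format and the example labels are hypothetical.

```python
from collections import Counter


def top_queries(ticket_categories, n=10):
    """Return the n most frequent categories as (category, count, share of total)."""
    # Normalize case and whitespace so "Leave Balance" and "leave balance " count together.
    cleaned = (c.strip().lower() for c in ticket_categories if c and c.strip())
    counts = Counter(cleaned)
    total = sum(counts.values())
    return [(cat, cnt, cnt / total) for cat, cnt in counts.most_common(n)]
```

The share column is worth keeping: if the top few categories account for most of the volume, the first automation scope is small and well defined.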

The pattern across all three starting points is the same. Start with the specific, not the general. Define what success looks like before you start building. Measure the right outcomes, not just adoption metrics. And be honest with the organization about what the AI is doing and what it is not doing, because the trust that makes people act on AI outputs is built through transparency and eroded through ambiguity.

HR AI that is built carefully, deployed transparently, and measured honestly does produce real results. Attrition that would have happened becomes retention because a conversation happened at the right time. Hiring that was inconsistent becomes more predictable because the screening criteria are applied uniformly. HR capacity that was consumed by administrative work gets redirected toward the strategic and relational work that actually moves the business forward.

At Resourcifi AI we build these systems across the full spectrum of HR applications. Predictive attrition and employee retention AI. AI recruitment and workforce planning AI. NLP HR applications for performance and engagement analysis. HR automation and intelligent HR systems for the operational layer. And we start every engagement with the data conversation rather than skipping it, because that is the only way to build something that performs in production rather than just in the demo. If you want to work through where your organization actually is on data readiness and what is realistically buildable from there, that is a conversation we are happy to have.